Course homepage for Optimisation Algorithms in Statistics I (Ph.D. course 2020; 4 HEC)

SummaryOptimisation (computation of minima or maxima) is frequently needed in statistics. Maximum likelihood estimates, optimal experimental designs, risk minimization in decision theoretic models are examples where solutions of optimisation problems usually do not have a closed form but need to be computed numerically with an algorithm. Moreover, the field of machine learning depends on optimisation and has new demands on algorithms for computation of minima and maxima.

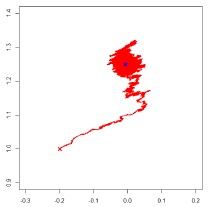

In this course, we will start with discussing properties of gradient based algorithms like the Newton method and the gradient descent method. We will then look in developments especially triggered by machine learning and discuss stochastic gradient based methods. Recent developments recognised the value of gradient free algorithms and we will consider so-called metaheuristic algorithms, e.g. particle swarm optimisation. The last topic of the course will deal with handling of restrictions during optimisation like equality and inequality restrictions.

We will implement these algorithms in R. Examples from machine learning and optimal design will illustrate the methods.

Most welcome to the course!

Frank Miller, Department of Statistics, Stockholm University

frank.miller@stat.su.se

Lectures: October 2; Time 10-12, 13-15 (registered participants received link to all lectures).

Reading:

- Givens GH, Hoeting JA (2013). Computational Statistics, 2nd edition. John Wiley & Sons, Inc., Hoboken, New Jersey. Chapter 2 until 2.2.3 (and Chaper 1.1-1.4 if needed).

- Goodfellow I, Bengio Y, Courville A (2016). Deep Learning. MIT Press, http://www.deeplearningbook.org. Chapter 4.3 (and parts of Chapter 2 and Chapter 4.2 if needed).

- Sun S, Cao Z, Zhu H, Zhao J (2019). A survey of optimization methods from a machine learning perspective, https://arxiv.org/pdf/1906.06821.pdf. Section I, II, IIIA1, IIIB1-2.

- AlphaOpt (2017). Introduction To Optimization: Gradient Based Algorithms, Youtube video (very elementary introduction of concepts).

- About analytical optimisation. (Frank Miller, September 2020)

- Understanding gradient descent. (Eli Bendersky, August 2016)

- Bisection method. (Frank Miller, March 2020; 4min video)

Example code: steepestascent.r

Assignment 1 (Deadline October 12).

Topic 2: Stochastic gradient based algorithms

Topic 2: Stochastic gradient based algorithms

Lecture: October 13; Time: 9-12 (Zoom).

Reading:

- Goodfellow I, Bengio Y, Courville A (2016). Deep Learning. MIT Press, http://www.deeplearningbook.org. Chapter 5.9 and 8.1 to 8.6 (and other parts of Chapter 5 based on interest).

- Sun S, Cao Z, Zhu H, Zhao J (2019). A survey of optimization methods from a machine learning perspective, https://arxiv.org/pdf/1906.06821.pdf. Section IIIA2-6, IIIB3 (other sections upon interest).

- Ruder S (2016). An overview of gradient descent optimization algorithms. https://arxiv.org/pdf/1609.04747.pdf

- Goh G (2017). Why momentum really works. Distill. https://distill.pub/2017/momentum/ (includes a derivation of the optimal step size in steepest ascent, and optimal parameters for momentum method).

- Mitliagkas I (2018/2019). IFT 6085 - Lecture 5; Accelerated Methods - Polyak's Momentum (Heavy Ball Method). http://mitliagkas.github.io/ift6085-2019/ift-6085-lecture-5-notes.pdf (includes a simplified derivation of the optimal step size in steepest ascent, and optimal parameters in Polyak's momentum).

- Mitliagkas I (2018/2019). IFT 6085 - Lecture 6; Nesterov's Momentum, Stochastic Gradient Descent. http://mitliagkas.github.io/ift6085-2019/ift-6085-lecture-6-notes.pdf (Example for non-convergence of Polyak's momentum method and description of Nesterov's momentum method).

About the solution of Problem 1.3.

Assignment 2 (Deadline October 22).

Dataset logist.txt.

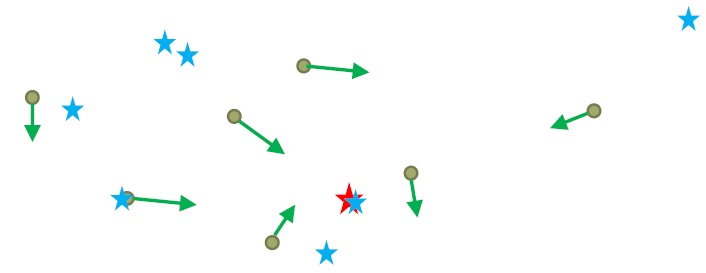

Topic 3: Gradient free algorithms

Topic 3: Gradient free algorithms

Lecture: October 23; Time 9-12 (Zoom).

Reading:

- Givens GH, Hoeting JA (2013). Computational Statistics, 2nd edition. John Wiley & Sons, Inc., Hoboken, New Jersey. Chapter 2.2.4 and 3.1 to 3.4.

- AlphaOpt (2017). Introduction To Optimization: Gradient Free Algorithms (1/2) - Genetic - Particle Swarm, Youtube video (elementary introduction of concepts).

- AlphaOpt (2017). Introduction To Optimization: Gradient Free Algorithms (2/2) Simulated Annealing, Nelder-Mead, Youtube video (elementary introduction of concepts).

- Clerc M (2012). Standard Particle Swarm Optimisation. 15 pages. https://hal.archives-ouvertes.fr/hal-00764996 (some background to details in implementation including choice of parameter values).

- Wang D, Tan D, Liu L (2018). Particle swarm optimization algorithm: an overview. Soft Comput 22:387-408. (Broad overview over research about PSO since invention).

- Goodfellow I, Bengio Y, Courville A (2016). Deep Learning. MIT Press, http://www.deeplearningbook.org. Chapter 5.2 and 7.1 (about regularisation).

Function g in Problem 3.1: bimodal.r.

Assignment 3 (Deadline November 5).

Dataset cressdata.txt.

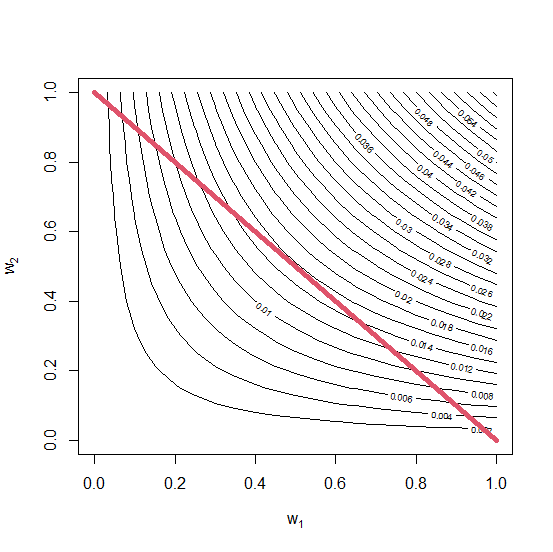

Topic 4: Optimisation with restrictions

Topic 4: Optimisation with restrictions

Lecture: November 6, Time 9-12 (Zoom)

Reading:

- Goodfellow I, Bengio Y, Courville A (2016). Deep Learning. MIT Press, http://www.deeplearningbook.org. Chapter 4.4-4.5 and 7.2.

- Lange K (2010). Numerical Analysis for Statisticians. Springer, New York. Chapter 11.3-11.4 (Optimisation with equality and inequality constraints) and Chapter 16.1-16.3 and 16.5 (Barrier method, model selection and the lasso).

Program for D-optimal design for quadratic regression in Lecture 4 Dopt_design.r.

Assignment 4 (Deadline November 27).

Final assignment

Final assignment

Assignment 5 (Deadline November 27).

Prerequisites

The course is intended for Ph.D. students from Statistics or a related field (e.g. Mathematical Statistics, Engineering Science, Quantitative Finance, Computer Science). Previous knowledge in the following is expected:

- familiarity with R (or another programming language with similar possibilities),

- basic knowledge in multivariate calculus (e.g. from a Multivariate Statistics course),

- statistical inference (e.g. from a Master's level course).

The course is graded Pass or Fail. Examination is through individual home assignments on problems for each topic and through a concluding mini project.

Course literatureWe will not use a central course book. Several articles, book chapters and other learning resources will be recommended.

Course structureThe course is divided into four topics. The topics will be discussed during online meetings with Zoom. Course participants will spend most of their study time by solving the problem sets for each topic on their own computers without supervision. The course will be held in October and November 2020.

A second part of the course with a deeper focus on theoretical foundations (Optimisation algorithms in statistics II, 3.5 HEC) will be offered in Spring 2021.

Course schedule- Topic 1: Gradient based algorithms

Lectures: October 2; Time 10-12, 13-15 (online, Zoom) - Topic 2: Stochastic gradient based algorithms

Lecture: October 13; Time: 9-12 (online, Zoom) - Topic 3: Gradient free algorithms

Lecture: October 23; Time 9-12 (online, Zoom) - Topic 4: Optimisation with restrictions

Lecture: November 6, Time 9-12 (online, Zoom)

To register for the course, please send an email to me (frank.miller at stat.su.se) until September 18, 2020. You are also welcome for any questions related to the course.